Here's this week's free edition of Platformer: a look at how Meta's newest AI model is rekindling the debate over whether open-source development can be as safe as more closed rivals like the ones from OpenAI and Google. We talked to Meta's chief product officer, Chris Cox. Do you value independent reporting on government and platforms? If so, consider upgrading your subscription today. We'll email you all our scoops first, like our recent one about the dismantling of the Stanford Internet Observatory. Plus you'll be able to discuss each day's edition with us in our chatty Discord server, and we’ll send you a link to read subscriber-only columns in the RSS reader of your choice.

Today, Meta released the largest ever open-source large language model to power generative artificial intelligence applications. Llama 3.1 represents an ambitious effort to shape the development of the AI industry in ways that favor Meta. It is also likely to spark new conversations about whether open-source models generate more harm than their closed counterparts.

In January I wrote about Meta’s unusual plan to make available for free a technology that it has spent more than a decade and tens of billions of dollars to build. Unlike its peers, including Google and OpenAI, Meta is not selling subscriptions to its AI for individuals or teams, and says it has no plans to do so.

In the short term, Meta says that open-sourcing Llama helps its own systems get better more quickly and cheaply than they otherwise would. Inviting a global ecosystem of developers to iterate and improve on its models, and funnel those improvements back into Meta’s core systems, accelerates the development of Meta’s own products and can save it money.

In the long term, owning one of the most powerful LLMs could provide the company with a basis for all manner of money-making products. It could also — and Meta lets this part go unsaid — thwart the development of competitors like OpenAI by giving away their core business for free. (One way to think of Llama 3.1 is that it is the free Google Docs to Microsoft’s paid Office 365.)

To make that happen, of course, Meta’s AI needs to be among the best in its class. The company said today that it is making strides in that direction: Llama 3.1’s largest, 405-billion-parameter model outperformed OpenAI’s GPT-4 Omni and Anthropic’s Claude 3.5 Sonnet on some benchmarks.

“If you compare them to the GPT family, if you compare them to the Claude family, if you compare them to Gemini — I'll let the numbers speak for themselves,” Chris Cox, Meta’s chief product officer, said in an interview with Platformer.
“But we're pretty proud now that some of them we’re beating, and then on some of them we’re just in range of best-in-class models. So that's really cool.”

And while developers have only had it in their hands for a few hours, the early reception has been positive. Some developers posting on Hacker News reported that Llama 3.1 performed at or near GPT-4o’s level.

People with extremely high-end hardware may be able to host the largest Llama 3.1 model themselves. But most developers will want to use a hosted service, and to that end today Meta announced partnerships with 25 companies that will make Llama 3.1 available to use through their cloud computing platforms, including Amazon Web Services, Google Cloud, Microsoft Azure, Databricks, and Dell.

The fact that an open-source model now rivals closed alternatives speaks to the way that every major AI developer’s LLMs are converging on one another in quality. Seemingly every few weeks now, one of the big AI players releases a new model, or variation of a model, with slightly improved cost, performance, or other attributes. And at least in my testing of the major players, the resulting products are mostly indistinguishable from one another.

That’s bad news if you’re selling a subscription for $20 a month, as OpenAI, Google, and Anthropic all are. But it’s great news for Meta, which can expedite the development of its own systems and undercut its rivals at the same time, all without damaging its core advertising business.

If there are risks to Meta here, they lie in the potential for regulation and damage to its reputation. That’s because open-source systems, by their nature, allow anyone to take them and repurpose them for their own ends, no matter what they might be. Fears that the open development of superhuman systems could create existential risk for humanity are among the reasons that OpenAI abandoned that approach in favor of a closed one.
Over the past two years, open-source vs. closed development has become one of the most hotly debated issues in tech. The Biden administration has not definitively favored one approach over the other. But in its executive order last year, the administration did call for projects that use computing power past a certain threshold to disclose that fact to the government and to perform safety testing.

Some open-source advocates argue that the administration is laying the groundwork for further restrictions that will effectively outlaw open source, and in so doing create a tiny cartel of vastly powerful AI companies that will reap most of the benefits of the technology. Among the voices making this argument is Marc Andreessen, the venture capitalist and longtime Meta board member. That concern was among the reasons that he and his business partner Ben Horowitz offered a full-throated endorsement of Donald Trump for president last week.

Zuckerberg has reached a similar conclusion. In a kind of manifesto published today, Meta’s CEO argued that open-source development is the best approach not only for Meta’s business, but for the world at large. He writes:

“It’s worth noting that unintentional harm covers the majority of concerns people have around AI – ranging from what influence AI systems will have on the billions of people who will use them to most of the truly catastrophic science fiction scenarios for humanity. On this front, open source should be significantly safer since the systems are more transparent and can be widely scrutinized. Historically, open source software has been more secure for this reason.”

Zuckerberg also suggests that there are few harms that can be surfaced through AI today that you can’t already Google. “We must keep in mind that these models are trained by information that’s already on the internet, so the starting point when considering harm should be whether a model can facilitate more harm than information that can quickly be retrieved from Google or other search results,” he writes.

These arguments seem sound enough today, when the models are barely capable of doing more than regurgitating their training data. It’s less clear how they will sound in a future when open-source software can reason, scheme, and execute complex plans — no matter what those plans might be.

And Meta is among the companies building toward that future. Cox told me that the next radical advancements in LLMs will come when they can master three skills: solving problems that require multiple steps; personalizing themselves to their users and developing a more complete sense of who their users are and what they need; and successfully taking action on their users’ behalf online.

Should LLMs develop those skills, and should those skills become part of the Llama of the future, Meta will surely attempt to put safeguards around the model to prevent it from being misused. But the nature of open-source technology is that the moment Llama 3.1 goes online, it is out of Meta’s hands. And where its rivals will retain broad power to shut down rogue systems, it’s less clear that Meta will be able to build a similar kill switch.

Open-source development has served Meta’s interests well so far, and the relatively benign AI tools we have today give us little reason to fear what Llama 3.1 can do. But it’s hard to make good policy for a technology that is improving in exponential leaps every few years. As AI grows more powerful, Meta’s case for open-source development will be worth revisiting.
Governing

- The Senate will vote this week on the Kids Online Safety Act, which would introduce privacy and content requirements for social media companies if made law. (Benjamin S. Weiss / Courthouse News Service)

- AI chatbots struggle to answer questions related to Trump, but AI companies’ partnerships with news publishers might help. (John Herrman / Intelligencer)

- Roblox, one of the biggest games for children, has a pedophile problem, according to this investigation. (Olivia Carville and Cecilia D’Anastasio / Bloomberg)

- Meta employees are reportedly debating Threads’s aversion to politics on the company’s internal forum, following the events in the past few weeks. Its head of product said she wasn't seeing any news of Biden dropping out of the race in her feed. (Kalley Huang and Sylvia Varnham O'Regan / The Information)

- TikTok Lite, a popular version of the app in the Global South, lacks AI safeguards, leaving users vulnerable to misinformation and deceptive content, a new report found. (Vittoria Elliott / WIRED)

- Malaysia and Singapore are extending government oversight of social media platforms, including Facebook, X, TikTok, and messaging apps like WhatsApp. They want to require a license for the apps to operate. (Norman Goh and Tsubasa Suruga / Nikkei Asia)

Industry

- Alphabet hit its earnings expectations but missed on YouTube revenue, sending its stock slightly down. (Jennifer Elias / CNBC)

- A new AI model led by Google, NeuralGCM, can improve long-term weather forecasting, including climate trends and extreme weather events, researchers say. (Michael Peel / Financial Times)

- Google and cloud security firm Wiz’s $23 billion acquisition talks fell apart, with Wiz co-founder Assaf Rappaport telling employees the company will continue to pursue an IPO instead. (Rohan Goswami, Jennifer Elias and Jordan Novet / CNBC)

- OpenAI reportedly reassigned Aleksander Madry, who was in charge of the team assessing AI models for “catastrophic risks,” to a different role in its research organization. (Stephanie Palazzolo / The Information)

- TikTok is reportedly planning to launch its Shop platform in Ireland and Spain as early as October. (Zheping Huang and Olivia Poh / Bloomberg)

- A look at Nick Pickles, Linda Yaccarino’s right-hand man at X, and his rise through the ranks at the company. (Hannah Murphy / Financial Times)

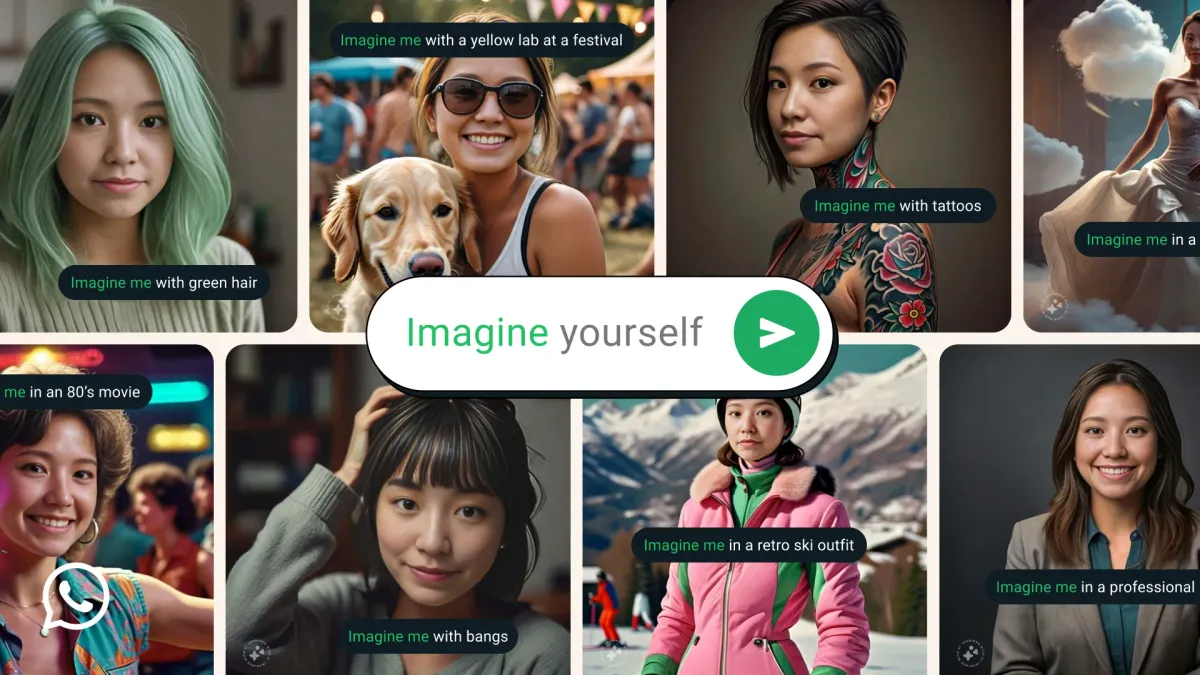

- Meta AI assistant is rolling out to Quest users in the US and Canada, replacing Voice Commands on Quest. (Mariella Moon / Engadget)

- Apple is reportedly working on a foldable iPhone that could be released as early as 2026. (Wayne Ma and Qianer Liu / The Information)

- Amazon’s new Prime Video update will allow users to see what’s included in a Prime membership and what costs extra, the company says. (Todd Spangler / Variety)

- Amazon is reportedly losing tens of billions of dollars from its devices business, including products like the Echo, Kindle, Fire TV sticks, and doorbells. (Dana Mattioli / Wall Street Journal)

- Spotify reported a record profit in the second quarter and strong growth in paying subscribers, sending its shares to their highest level in over three years. (Ashley Carman / Bloomberg)

- Adobe is rolling out new generative AI features, powered by its latest Firefly Vector AI model, to Illustrator and Photoshop. (Jess Weatherbed / The Verge)

- Major gaming companies like Activision Blizzard are already using generative AI for game development amid mass layoffs, this investigation found. (Brian Merchant / WIRED)

Those good posts

For more good posts every day, follow Casey’s Instagram stories.

Talk to us

Send us tips, comments, questions, and open-source vulnerabilities: casey@platformer.news and zoe@platformer.news.
